Cloud procurement platform built with AI-driven development in under 8 weeks

Platform development

Cloud procurement / SaaS

Python, FastAPI, Vertex AI, Claude Code, FlowForge

2024

United Kingdom

January 2025 - Present

3

Executive Summary

Impressit built Canopy's AI-powered cloud procurement platform from scratch — and delivered it twice as fast as the industry standard, using Claude Code and FlowForge as the primary development approach.

Key metrics:

- ×2 faster — development speed compared to a conventional approach

- 8 weeks — from kickoff to the platform release

Client Context

Canopy is a UK-based cloud broker. The company helps enterprise clients find the right cloud provider based on price, features, and reliability, and negotiates better terms on their behalf.

The market opportunity is clear: dozens of alternatives to AWS, Google Cloud, and Azure exist, including regional providers, AI-optimized infrastructure, and niche platforms. A $100,000/month Amazon bill can often be cut in half with the right alternative. Most companies never find those alternatives. Providers' own comparison content is written to favor themselves.

Canopy solves this as a Cloud Agnostic platform: no provider preferences, transparent data, independent recommendations. Partnership agreements with providers allow Canopy to offer prices below retail, including a discount on Amazon unavailable when going direct.

Before the platform, Canopy operated entirely offline: manual consultations, one deal at a time. That model doesn't scale. Canopy couldn't serve the self-serve segment — companies spending under $30K/month on cloud — and remained limited to high-touch enterprise work. The platform was built to cover all five customer segments: startups (1–10 people), small business (up to 50), mid-market (up to 200), upper mid-market (up to 1,000), and enterprise. Smaller segments get a fully self-serve online experience. Enterprise accounts get sales team involvement alongside the platform.
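The five segments above are defined by headcount thresholds, which can be expressed as a small lookup. This is only an illustrative sketch: the thresholds come from the text, while the function name, constant names, and segment labels are assumptions and not Canopy's actual code.

```python
from bisect import bisect_left

# Upper headcount bounds taken from the segment list above.
# Names are illustrative, not taken from the real platform.
SEGMENT_BOUNDS = [10, 50, 200, 1000]
SEGMENT_NAMES = [
    "startup",
    "small business",
    "mid-market",
    "upper mid-market",
    "enterprise",
]


def classify_segment(headcount: int) -> str:
    """Map a company headcount to one of the five customer segments."""
    if headcount < 1:
        raise ValueError("headcount must be at least 1")
    # bisect_left returns the index of the first bound >= headcount,
    # or len(SEGMENT_BOUNDS) when headcount exceeds every bound (enterprise).
    return SEGMENT_NAMES[bisect_left(SEGMENT_BOUNDS, headcount)]
```

For example, `classify_segment(35)` falls into the small business segment, and anything above 1,000 people is treated as enterprise.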

Business Problem

Canopy had a clear product vision and deep market knowledge, but no internal engineering team. Their Chief Technical Product Officer set the direction. Impressit owned everything else.

The team faced four interconnected challenges.

- No codebase, no legacy. That gave the team full freedom in technical decisions — and full accountability for them. A wrong call early would mean costly rework down the line. The foundation had to be right the first time.

- One platform. Fifty providers. Three data inputs. Invoice upload, form, and AI chat each capture the same requirements in a different way, so each needed its own processing approach. The solution: integration with Cloud Mercato's GraphQL API as the central data layer. Impressit normalized all provider data into a single consistent structure on top of it.

- AI in Production, Not in a Prototype. Parsing cloud invoices with Claude Opus is not a demo feature. Invoices have arbitrary structures that vary by provider and don't yield to standard document parsing. The model needs to understand cloud service context — not just extract text — and structure the output reliably under real load. Canopy's priority was large invoices: 35+ pages, where the most significant costs are concentrated. At that volume, Sonnet returns incomplete data and lower extraction quality. Opus handles the full scope. That determined the model choice from day one.

- Fast Delivery with a Lean Team. Three specialists had to cover the full stack: full-stack development, DevOps, and AI integration. Expanding the team wasn't on the table. That meant one thing: find a way to meaningfully increase each engineer's output, or fall short of the client's expectations.
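The normalization step mentioned in the second challenge can be pictured with a minimal sketch. The schema of Cloud Mercato's API is not described here, so the raw record shape, every field name, and the `NormalizedOffer` structure below are assumptions chosen for illustration. The idea is only that records arriving with different units end up in one consistent structure.

```python
from dataclasses import dataclass

HOURS_PER_MONTH = 730  # common billing approximation (8,760 hours / 12)


@dataclass(frozen=True)
class NormalizedOffer:
    """Hypothetical common structure shared by all providers."""
    provider: str
    name: str
    vcpus: int
    memory_gib: float
    usd_per_month: float


def normalize(raw: dict) -> NormalizedOffer:
    """Convert one raw record (assumed shape) into the common structure."""
    # Memory may arrive in MiB or GiB depending on the record.
    if "memory_mib" in raw:
        memory_gib = raw["memory_mib"] / 1024
    else:
        memory_gib = float(raw["memory_gib"])
    # Prices may be hourly or monthly; store monthly everywhere.
    if "usd_per_hour" in raw:
        usd_per_month = round(raw["usd_per_hour"] * HOURS_PER_MONTH, 2)
    else:
        usd_per_month = float(raw["usd_per_month"])
    return NormalizedOffer(
        provider=raw["provider"],
        name=raw["name"],
        vcpus=int(raw["vcpus"]),
        memory_gib=memory_gib,
        usd_per_month=usd_per_month,
    )
```

Once every record passes through a function like this, the matching logic can compare offers without caring which provider they came from.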

Solution Overview

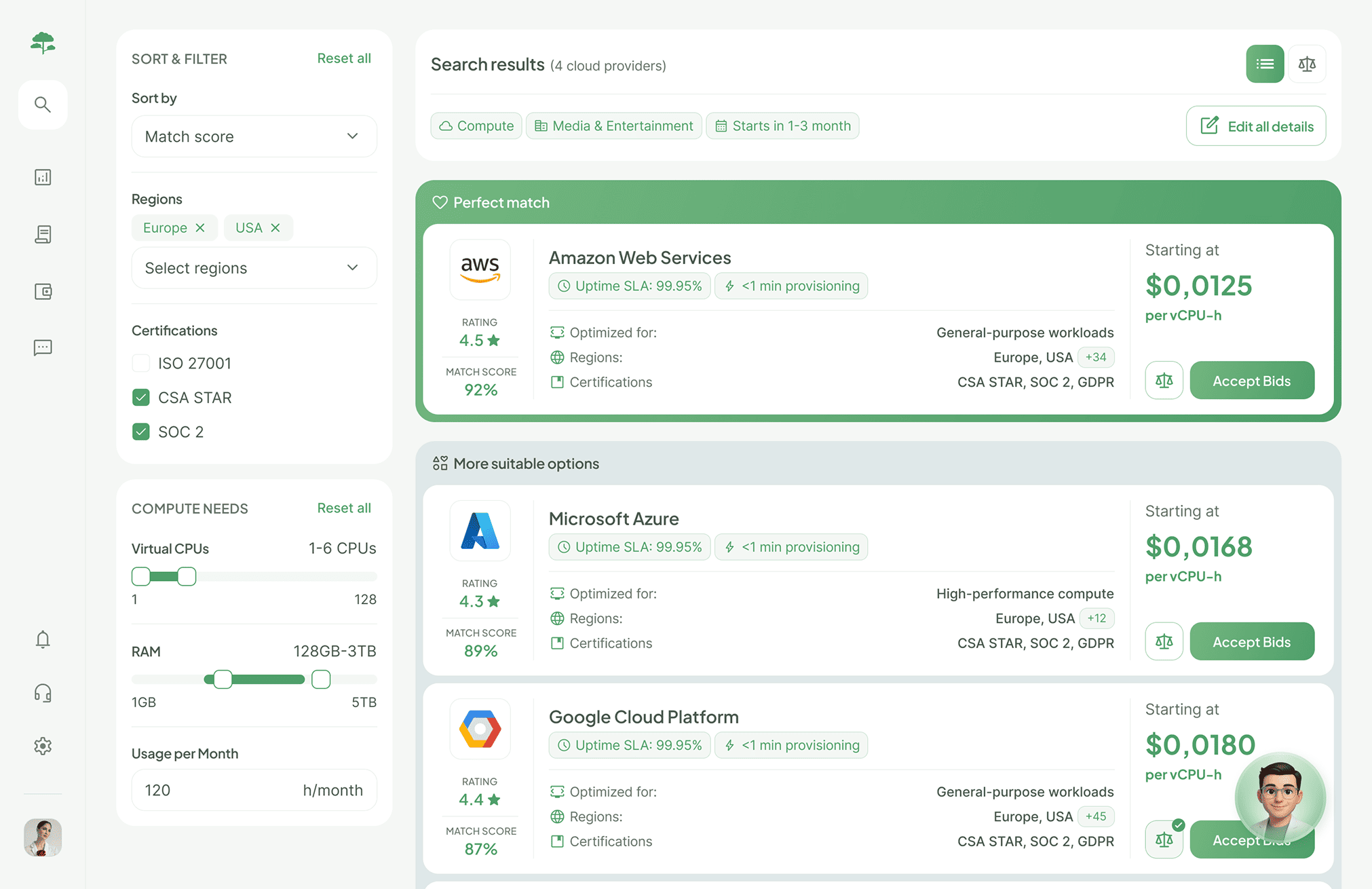

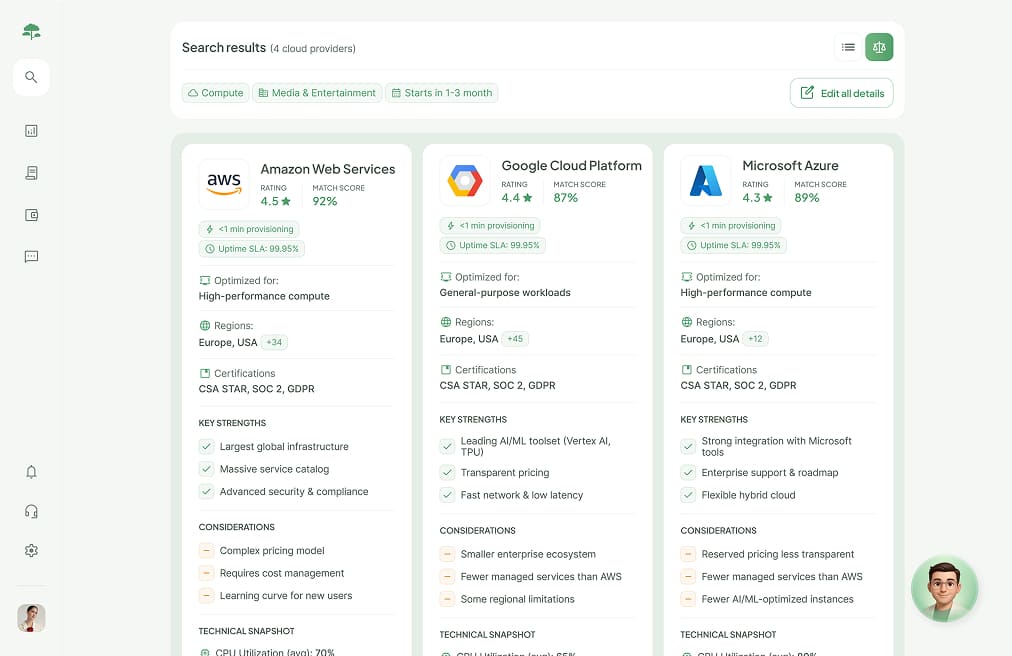

Impressit has built Canopy's web platform for automated cloud provider comparison. The Canopy platform is Cloud Agnostic — no provider preferences, transparent data based on each customer's actual needs.

The platform supports three provider search scenarios:

- Invoice upload. The user uploads their current monthly cloud bill. The platform parses it using Claude Opus via Vertex AI on Google Cloud, identifies which services are in use and at what cost, and matches alternatives across ~50 providers. Output: a comparison with prices and specs.

- Basic search. For users without an invoice or unwilling to share one. A multi-step form captures infrastructure requirements. The system runs the match without AI logic.

- AI search. A chat interface replaces the form. Claude via Vertex AI collects the same data through natural conversation. Model choice — Opus or Sonnet — will be determined through testing.
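The basic search scenario runs the match without AI logic. One plausible shape for such a match is a plain filter-and-sort over normalized offers, sketched below. The `Offer` and `Requirements` types, their fields, and the "cheapest first" ranking are assumptions for illustration. The actual matching algorithm is not described in this case study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offer:
    provider: str
    vcpus: int
    memory_gib: float
    region: str
    usd_per_month: float


@dataclass(frozen=True)
class Requirements:
    """Hypothetical subset of what the multi-step form captures."""
    min_vcpus: int
    min_memory_gib: float
    region: str


def match_offers(req: Requirements, offers: list[Offer], limit: int = 5) -> list[Offer]:
    """Return the cheapest offers that satisfy every requirement."""
    eligible = [
        o for o in offers
        if o.vcpus >= req.min_vcpus
        and o.memory_gib >= req.min_memory_gib
        and o.region == req.region
    ]
    # Deterministic ranking: lowest monthly price first.
    return sorted(eligible, key=lambda o: o.usd_per_month)[:limit]
```

Because the invoice and chat scenarios collect the same kind of requirements, a function like this could in principle sit behind all three inputs.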

Key integrations with Claude:

- Google Cloud Vertex AI → Claude Opus (Anthropic) — invoice parsing and AI chat

- Atlassian MCP — project management

Our Approach

Impressit took full ownership of the technical cycle, from architecture to deployment. The three-person team worked in clear ownership zones: platform admin, parsing and matching algorithms, and analytics and CMS. Each zone was further split into responsibility areas within the team.

The defining characteristic of the Impressit approach: AI-driven development as the primary method, not a supplement.

AI-driven development in practice

The standard way to use AI in development is for isolated tasks: write a function, find a bug, explain some code. Impressit's team went further: Claude Code became every engineer's primary development environment, every day.

Using Claude for a small task and building a production-grade platform with Claude are different things. Before writing product code, the team invested in configuring the environment for this specific project:

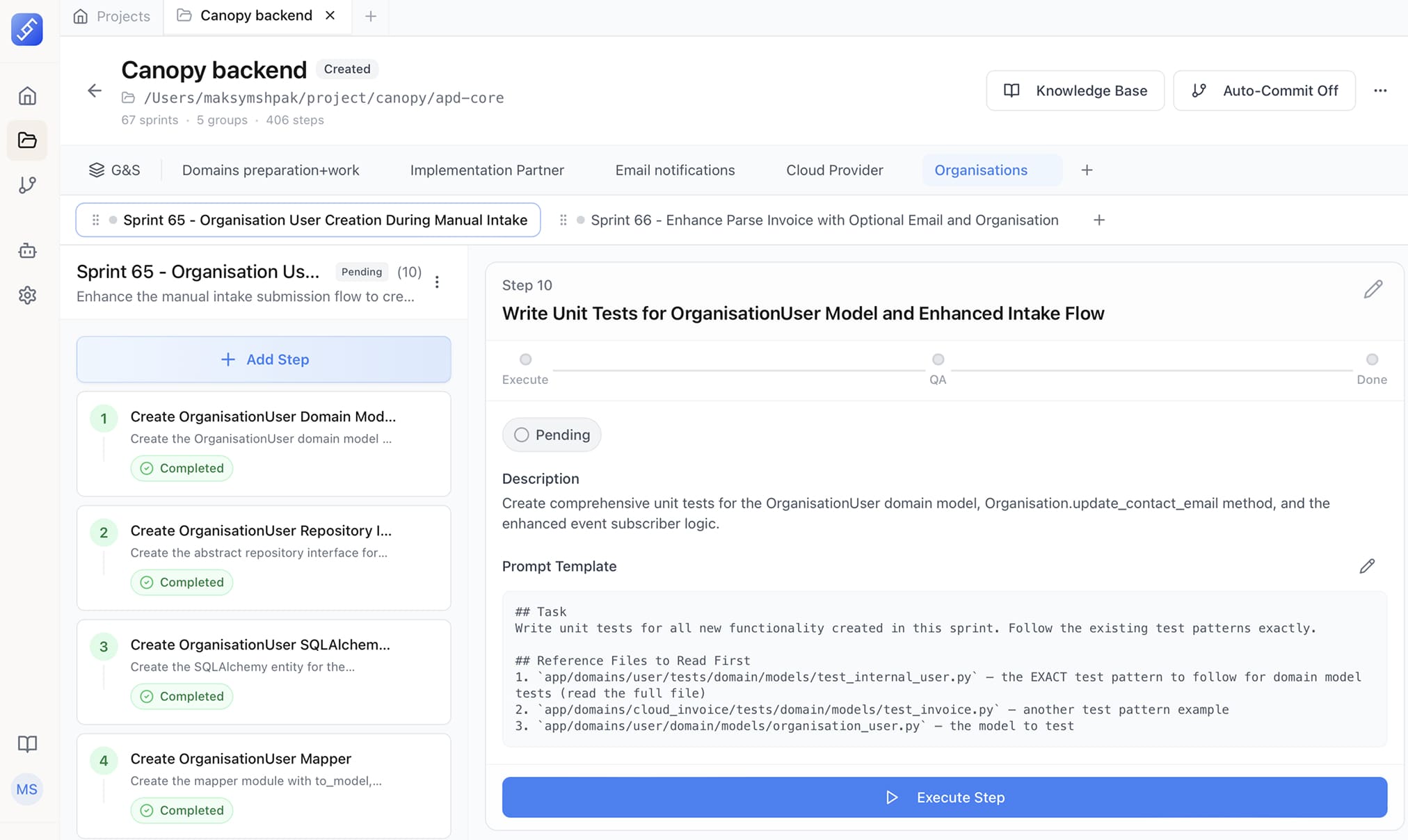

- Rather than prompting Claude directly, the team built agents for each task type. A backend Python Developer agent: pre-loaded with FastAPI knowledge and project conventions. A bugfix agent: defined sequence — diagnose first, then fix. A QA agent: runs after every task and checks compliance with all rules and conventions. Agents are project-specific. The FastAPI agent won't work out of the box on a different Python project. That's intentional — it's a deliberate investment for this context.

- All Claude configurations — agents, skills, conventions — live in the same repo as the codebase, on GitHub. One engineer updates an agent, pushes, and the whole team gets it on the next pull. No separate sync process. Most teams keep AI configs local. This team doesn't.

- One consistent practice: always tell Claude whether you want an analysis or a fix. Claude's default is to solve the problem. If the engineer wants to understand the root cause first, Claude will skip ahead and fix it anyway — unless explicitly told not to. "Don't fix it, explain it" became a standard part of the team's workflow.

- The team deliberately split the AI toolset by task complexity:

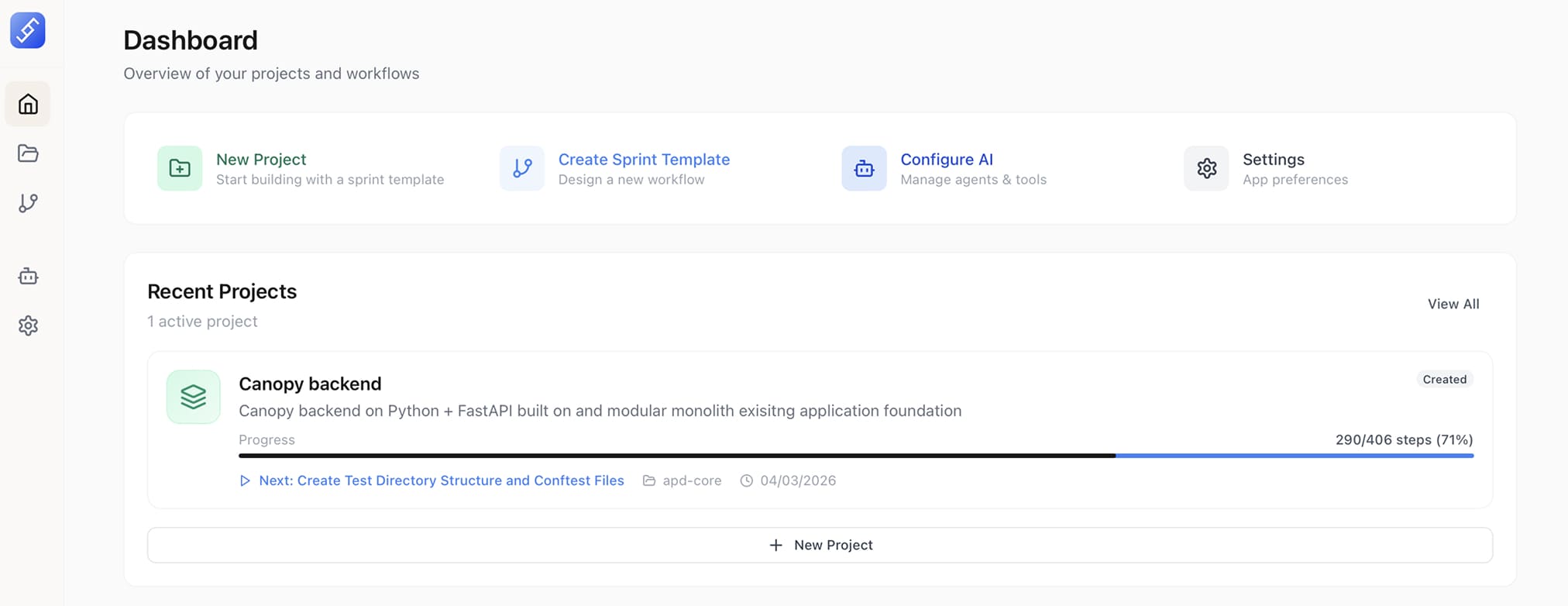

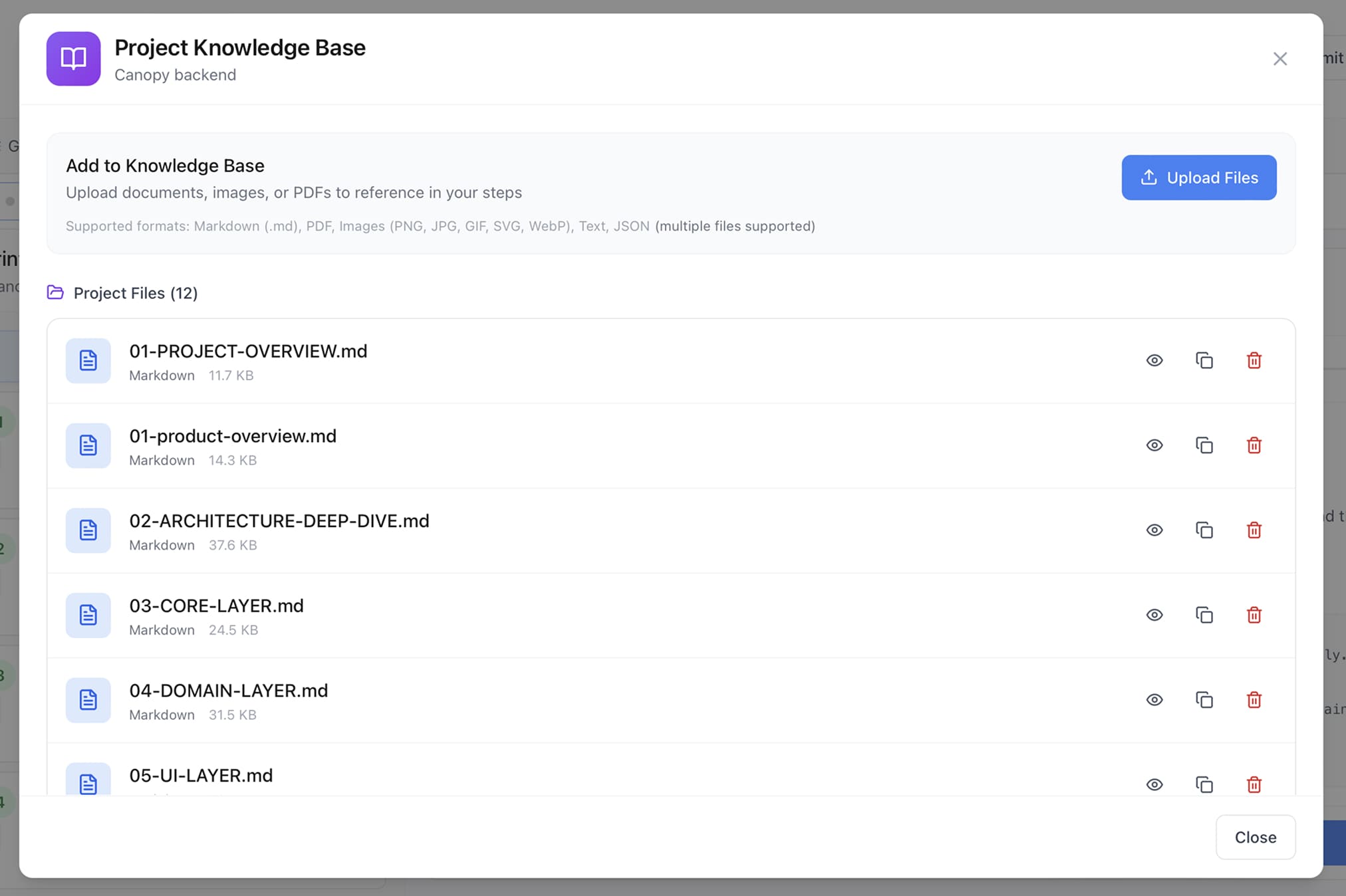

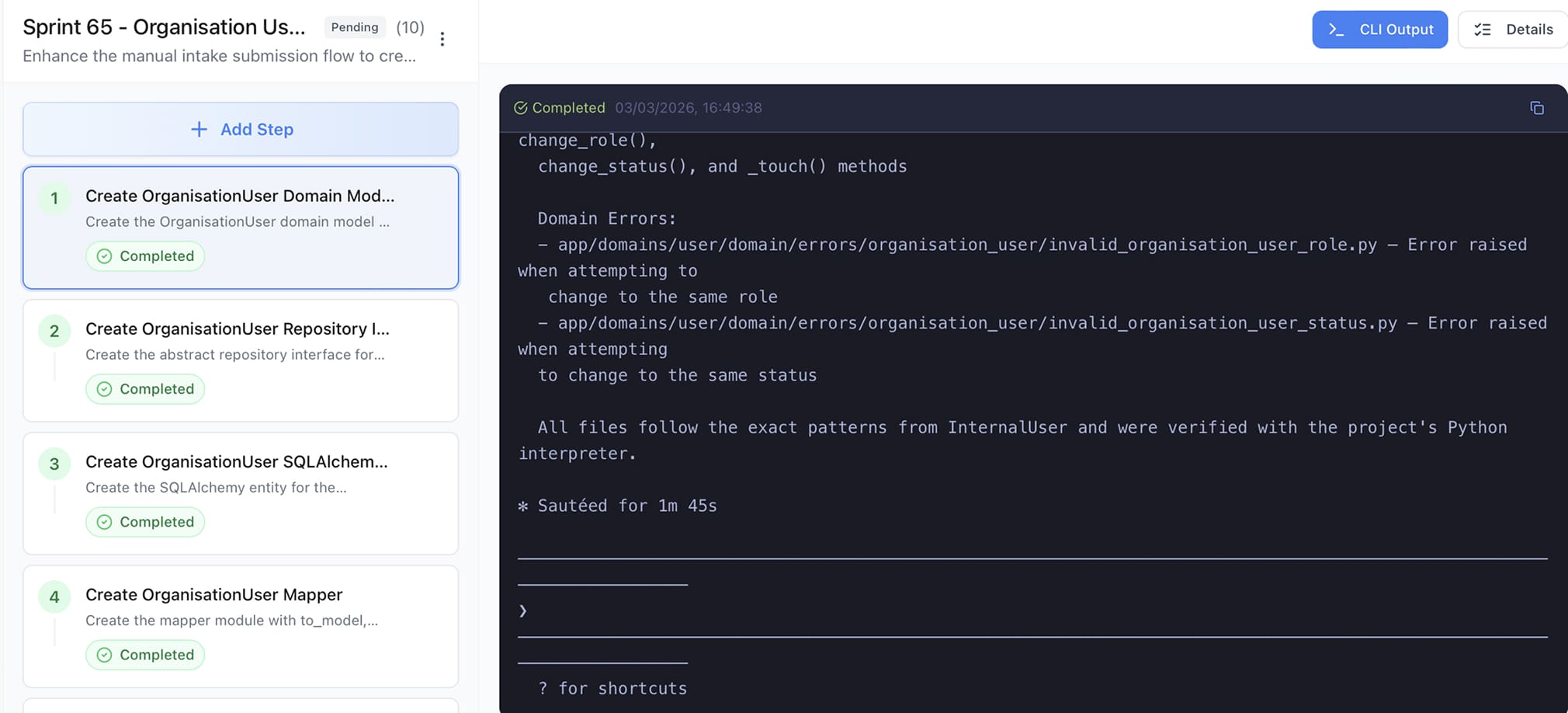

- FlowForge (Impressit's internal tool, integrated with Claude) — for large, multi-step work: architecture design, R&D phases, project scaffolding, documentation generation. FlowForge structures work into sprints and steps with clear dependencies. Each step runs in its own Claude session, preventing context overload on long projects. Each completed step triggers an automatic git commit. A built-in QA phase produces a pass/fail verdict with a full report. FlowForge was used before any product code was written — to build the platform skeleton, establish conventions, and set rules.

- Claude Code directly — for targeted work: features, fixes, components. FlowForge's overhead isn't worth it for small tasks. Speed matters more.

The upfront investment paid off immediately. A task that would take two days conventionally now takes 30–40 minutes.

Process tool integrations

- Atlassian MCP. The team lead provides Claude with a business requirements document and backend technical documentation. Claude generates structured epics and tickets aligned to both. After review, one command pushes everything to Jira with assignments. Requirements and the ticket tracker stay in sync.

Key Decisions

Decision 1: Vertex AI over direct Anthropic API. Canopy holds a substantial Google Cloud credit — allocated as part of their cloud broker partnership with Google. Routing Claude through Vertex AI draws costs from that existing credit rather than requiring a separate Anthropic account. The AI infrastructure is effectively free. Additional benefit: Vertex AI's unified interface allows switching models and providers without rewriting the integration.

Trade-off accepted: additional dependency on Google Cloud — a policy change at Vertex AI would affect Anthropic model access too.

Decision 2: Claude Opus over Sonnet for invoice parsing. Cloud invoices aren't standard documents. They require understanding of service context, not just text extraction — and they vary structurally by provider. On short invoices (up to 5–6 pages), Sonnet and Opus perform comparably. But Canopy's priority is large invoices: 35+ pages, where the highest costs are concentrated. At that scale, Sonnet returns incomplete data. Opus extracts the full service spectrum and structures the output reliably for the matching algorithm. The quality of parsing directly determines the quality of matching. The cost difference is covered by the Google Cloud credit.

Trade-off accepted: higher model cost per call.
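Because parsing quality determines matching quality, it helps to reject incomplete extractions before they reach the matcher. The sketch below shows one way to do that, assuming the model is asked to return JSON with a `line_items` array and an `invoice_total_usd` field. Those field names, the `LineItem` type, and the reconciliation check are all assumptions for illustration and are not described in the source.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class LineItem:
    service: str
    usd: float


def parse_invoice_output(model_output: str, tolerance: float = 0.01) -> list[LineItem]:
    """Parse the model's JSON and reject output that looks incomplete."""
    data = json.loads(model_output)
    items = [
        LineItem(service=str(entry["service"]), usd=float(entry["usd"]))
        for entry in data["line_items"]
    ]
    if not items:
        raise ValueError("no line items extracted")
    extracted = sum(item.usd for item in items)
    stated = float(data["invoice_total_usd"])
    # If the items do not add up to the stated total, some services were
    # probably missed, so fail instead of matching on partial data.
    if abs(extracted - stated) > tolerance * stated:
        raise ValueError(
            f"extracted {extracted:.2f} USD but invoice states {stated:.2f} USD"
        )
    return items
```

A check like this would surface the "incomplete data" failure mode on long invoices as an explicit error rather than a quietly worse comparison.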

Decision 3: AI-driven development as the primary method, not a supplement. The team didn't add Claude as another tool — they rebuilt the development workflow around it. That required deliberate upfront investment before any product code was written: specialized agents for each task type, shared skills, and FlowForge onboarding for complex work. All configurations live in the project's GitHub repository alongside the codebase — one git push, and the whole team has any update on the next pull. Most teams keep AI configs local. This team doesn't.

Trade-off accepted: higher setup cost at the start.

The payoff: a task that would take two days conventionally now takes 30–40 minutes. Project cost cut by half.

Decision 4: FlowForge for complex tasks — solving the AI context problem. On large projects, Claude can lose coherence when the context window becomes overloaded. It's not hallucination — it's degraded focus without structure. FlowForge addresses this structurally: each step runs in its own clean Claude session, with prior artifacts accessible through the knowledge base but not flooding the active context. Every completed step produces an automatic git commit — if Claude makes an error, rollback takes seconds. After each step, a mandatory QA phase issues a pass/fail verdict; on fail, an automatic correction cycle runs before the engineer confirms and moves on.

Trade-off accepted: additional tooling dependency and onboarding time upfront.
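The step structure described in Decision 4 can be modelled in a few lines. FlowForge is an internal tool and its API is not public, so nothing below is FlowForge code: `Step`, `run_pipeline`, and the callables are hypothetical stand-ins. The sketch only illustrates the control flow of dependency-ordered steps, a QA verdict per step, a bounded correction cycle on failure, and a recorded commit once a step passes (a simplification of the commit timing described above).

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter
from typing import Callable


@dataclass
class Step:
    name: str
    run: Callable[[], str]     # stands in for one isolated session producing an artifact
    qa: Callable[[str], bool]  # pass/fail verdict on that artifact
    depends_on: list[str] = field(default_factory=list)


def run_pipeline(steps: list[Step], max_corrections: int = 2) -> list[str]:
    """Run steps in dependency order and return the log of recorded commits."""
    by_name = {s.name: s for s in steps}
    graph = {s.name: set(s.depends_on) for s in steps}
    commits = []
    # static_order() yields each step only after all of its dependencies.
    for name in TopologicalSorter(graph).static_order():
        step = by_name[name]
        artifact = step.run()
        attempts = 0
        while not step.qa(artifact):
            if attempts == max_corrections:
                raise RuntimeError(f"step {name!r} failed QA")
            attempts += 1
            artifact = step.run()  # correction cycle: rerun the step
        commits.append(f"commit: {name}")  # placeholder for a real git commit
    return commits
```

Since each step produces its own commit entry, rolling back a bad step amounts to reverting one commit, which is the property the text highlights.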

Decision 5: Agent configs in the repository. All Claude settings live alongside the codebase, not in a separate system. This standardized team behavior and eliminated the scenario where each engineer maintains a personal AI setup.

Trade-off accepted: requires discipline to keep configs updated — a stale agent can propagate bad conventions team-wide.

Decision 6: QA agent as a mandatory step. A dedicated QA agent checks convention compliance after every task. This reduces errors and removes the need for manual code review on routine checks. In a project without ongoing technical review from the client side, quality is controlled systematically, not by individual engineer attention.

Trade-off accepted: adds time per task cycle. Worth it at project scale.

Results and Business Value

Canopy launched without an engineering team and without a codebase.

The delivery numbers reflect what AI-driven development made possible on a three-person team: platform working in 4 weeks, overall development speed at twice the conventional rate, project cost estimate cut in half. No additional headcount. No extended timeline.

Beyond the metrics, the structural shift matters more: Canopy moved its core business process online for the first time. The platform now runs that process, and for enterprise accounts it compresses the first stage of the sales cycle: initial comparison happens online, before a sales rep gets involved.
